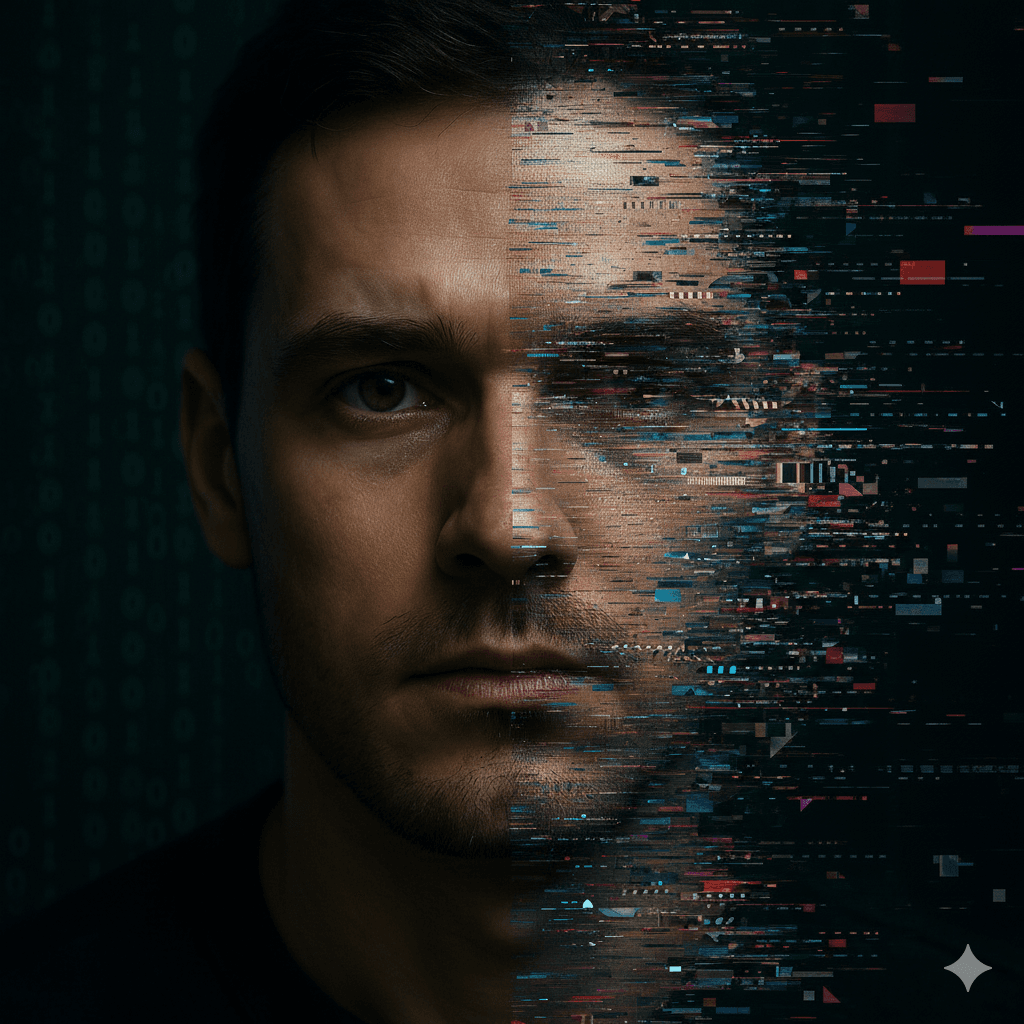

In an era dominated by digital content, deepfakes and the misinformation they carry are emerging as some of the most serious threats enabled by artificial intelligence. Manipulated audio, images, and video can convincingly impersonate real people, spread false narratives, and undermine public trust. Understanding how deepfakes work, what their consequences are, and how to guard against them is essential to protecting the information ecosystem.

What Are Deepfakes and Why They Matter

- Deepfakes are synthetic media created using AI—especially techniques like Generative Adversarial Networks (GANs)—to produce highly realistic but manipulated content.

- They include fake videos, audio clips, and images in which someone’s face or voice is replaced or altered so that they appear to say or do something they never did.

- As AI advances, the realism of deepfakes has increased, making them harder to detect and more dangerous.

How Deepfakes Fuel Misinformation

1. Political Manipulation

Deepfakes have been used to sway public opinion during elections by misrepresenting statements of politicians or creating fake events. India’s Deepfakes Analysis Unit (DAU) has dealt with many audio and video files flagged for misrepresentation during recent elections.

2. Fraud & Impersonation

Fraudsters use audio deepfakes to impersonate individuals, such as CEOs or public figures, to extract money or sensitive data.

3. Reputation Damage & Defamation

Deepfake visuals of celebrities or everyday people have been used to create misleading or false content, harming their reputation and causing emotional distress.

4. Erosion of Trust in Media

When people can’t tell what’s real from what’s fake, trust in legitimate news sources suffers and the credibility of factual reporting erodes. Platforms and audiences alike find themselves questioning everything.

Key Techniques & Technologies Behind Deepfakes

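- Generative Adversarial Networks (GANs): The core technology powering many deepfakes. A generator network creates fake content, while a discriminator network tries to distinguish fake from real. Because each is trained against the other, both improve over time, and the generator’s output becomes harder to tell apart from real data.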

- Voice Cloning & Audio Deepfakes: Using small audio samples, AI can mimic someone’s voice convincingly. These are used in scams and impersonation.

- Synthetic Media Platforms: As tools become more accessible (for example, open-source or low-cost AI tools), more people can generate deepfakes without advanced technical skills.
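
To make the generator-versus-discriminator dynamic more concrete, here is a minimal sketch of the two loss values a GAN training loop typically tracks. It is an illustration only, not a full training setup: the function names and the sample probabilities are hypothetical, and the generator loss shown is the commonly used "non-saturating" variant rather than the original minimax form.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator.

    d_real: discriminator outputs (probability of "real") on real samples.
    d_fake: discriminator outputs on generated samples.
    The discriminator wants d_real close to 1 and d_fake close to 0.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss (an assumed, common variant).

    The generator wants the discriminator to output values close to 1
    on generated samples, so the loss falls as d_fake rises.
    """
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs at two points in training.
early_fake = [0.1, 0.2]   # fakes are easy to spot
later_fake = [0.5, 0.5]   # discriminator can no longer tell

print("generator loss, early:", generator_loss(early_fake))
print("generator loss, later:", generator_loss(later_fake))
print("discriminator loss, later:", discriminator_loss([0.5, 0.5], later_fake))
```

In this toy example the generator's loss drops as its output fools the discriminator more often, which is the same pressure that makes real deepfakes progressively harder to detect.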

Real-World Cases and Statistics

- In India, many people struggle to distinguish between real and manipulated content shared on social media. Debunking a deepfake is sometimes delayed because the original, unaltered content is not available for comparison.

- The UN’s ITU has raised alarms over the potential of deepfake videos and images to influence elections and enable financial fraud globally.

- Several countries are taking legal and regulatory steps. Denmark, for instance, is working on making the distribution of deepfake content illegal, citing the risk of misinformation.

Challenges in Detecting Deepfakes

- Technical Limitations: Even the best detection algorithms produce false negatives, meaning they classify some deepfakes as genuine. Some deepfakes are high-quality enough to evade detection entirely.

- Rapid Evolution: As detection improves, generation techniques evolve faster, creating a kind of arms race.

- Lack of Awareness: Many people don’t know how to verify media or suspect deepfakes. Misleading content gets shared before being debunked.
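
The false-negative problem mentioned above can be illustrated with a short sketch. It assumes a hypothetical detector that outputs a score between 0 and 1 for each clip, where higher means "more likely fake"; the labels and scores below are made up for illustration and do not come from any real detector.

```python
def false_negative_rate(labels, scores, threshold):
    """Share of actual deepfakes (label 1) scored below the threshold."""
    fake_scores = [s for y, s in zip(labels, scores) if y == 1]
    if not fake_scores:
        raise ValueError("no deepfake samples in labels")
    missed = sum(1 for s in fake_scores if s < threshold)
    return missed / len(fake_scores)

def false_positive_rate(labels, scores, threshold):
    """Share of genuine clips (label 0) scored at or above the threshold."""
    real_scores = [s for y, s in zip(labels, scores) if y == 0]
    if not real_scores:
        raise ValueError("no genuine samples in labels")
    flagged = sum(1 for s in real_scores if s >= threshold)
    return flagged / len(real_scores)

# Made-up evaluation set: 1 = deepfake, 0 = genuine.
labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.95, 0.80, 0.55, 0.30, 0.60, 0.28, 0.10, 0.05]

for threshold in (0.25, 0.5, 0.9):
    fnr = false_negative_rate(labels, scores, threshold)
    fpr = false_positive_rate(labels, scores, threshold)
    print(f"threshold={threshold}: missed fakes={fnr:.2f}, false alarms={fpr:.2f}")
```

Lowering the threshold catches more deepfakes but flags more genuine clips, and raising it does the reverse. This trade-off is one reason no single detector setting eliminates false negatives.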

What Can Be Done: Defense & Mitigation

1. Technology Solutions

- Watermarking and metadata tagging for original content.

- Advanced detection tools that use machine learning to spot anomalies.

- Multi-modal detection: combining audio, video, and image analysis for verification.
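
As a rough illustration of metadata tagging for original content, the sketch below binds a file's hash to its source with a keyed signature, so that any later edit to the media invalidates the tag. It is a simplified stand-in under stated assumptions: the key, the source name, and the function names are hypothetical, and production provenance systems generally rely on public-key signatures and standardized manifests rather than a shared secret.

```python
import hashlib
import hmac
import json

# Hypothetical publisher secret, for illustration only. A real deployment
# would more likely use public-key signatures so that anyone can verify
# a tag without holding the signing key.
SECRET_KEY = b"example-publisher-key"

def _sign(digest: str, source: str) -> str:
    """Sign the (hash, source) pair with HMAC-SHA256."""
    payload = json.dumps({"sha256": digest, "source": source}, sort_keys=True)
    return hmac.new(SECRET_KEY, payload.encode("utf-8"), hashlib.sha256).hexdigest()

def make_provenance_tag(media_bytes: bytes, source: str) -> dict:
    """Build a metadata record tying the media content to its source."""
    digest = hashlib.sha256(media_bytes).hexdigest()
    return {"sha256": digest, "source": source, "signature": _sign(digest, source)}

def verify_provenance_tag(media_bytes: bytes, tag: dict) -> bool:
    """Recompute the hash from the media and check it against the signature."""
    digest = hashlib.sha256(media_bytes).hexdigest()
    expected = _sign(digest, tag["source"])
    return hmac.compare_digest(expected, tag["signature"])

original = b"raw bytes of the original video"
tag = make_provenance_tag(original, "newsroom.example")

print(verify_provenance_tag(original, tag))                # unmodified media
print(verify_provenance_tag(original + b" edited", tag))   # altered media
```

A tag like this does not detect deepfakes by itself. It only lets a verifier confirm that a given file is the one the publisher originally released, which complements the detection tools listed above.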

2. Regulatory Measures & Policy

- Laws and policies to penalize non-consensual deepfakes and restrict malicious use.

- Platform accountability: social networks should enforce stricter controls and content verification.

3. Public Awareness & Media Literacy

- Educating users on spotting signs of deepfakes (e.g. mismatched lip sync, odd facial expressions, lighting issues).

- Encouraging fact-checking and verifying sources before sharing.

4. Collaboration Across Stakeholders

- Governments, tech companies, NGOs, and research institutions need to work together.

- Example: India’s DAU under the Misinformation Combat Alliance allows public reporting of suspicious content via WhatsApp.

Conclusion

Deepfakes represent a dark side of AI—one that threatens truth, trust, and societal stability. However, by combining technology, regulation, and informed citizens, we can mitigate these risks. The future depends on not just innovation, but responsible use of AI.

Let’s stay vigilant, demand transparency, and insist that digital media ethics keep pace with technological progress.